Producing CSDMS-Compliant Code

E.W.H. Hutton, J.P.M. Syvitski & S.D. Peckham

CSDMS Integration Facility, University of Colorado, Boulder CO, USA

Abstract

The Community Surface Dynamics Modeling System (CSDMS) provides cyber-infrastructure to aid the development, distribution, archiving, and integration of the suite of models that describe Earth’s surface. This paper concentrates on model integration and on guidelines that help modelers within the RCEM community create models that can be shared within their own community and with the broader Earth-surface modeling community. We describe how models are integrated with one another within the Common Component Architecture (CCA) component-modeling framework, and the requirements they must meet to be integrated. For models to be integrated within a component framework, they should expose an Initialize, Run, and Finalize interface, and be annotated with the metadata needed to link them with other models. By refactoring existing models, and creating new models that follow this pattern, the community gains access to a large suite of standardized models. This allows the community to integrate models within new applications, compare and test models, and identify model overlap and gaps in knowledge.

Introduction

The Community Surface Dynamics Modeling System (CSDMS) develops, supports, and disseminates integrated software modules that predict the movement of fluids, and the flux (production, erosion, transport, and deposition) of sediment and solutes in landscapes and their sedimentary basins. CSDMS uses the Common Component Architecture (CCA) to allow components to be combined and integrated for enhanced functionality on high-performance computing (HPC) systems. CCA defines the standards necessary for the interoperation of components developed in the context of a “framework”: a software environment or infrastructure in which components can be linked to create applications (Bernholdt et al., 2006[1]). The CCA framework used by CSDMS, Ccaffeine, provides a set of services for parallel computing that all components can access directly.

Bocca is a CCA development environment tool that provides a comprehensive build environment for creating and managing applications composed of CCA components (Elwasif et al., 2007[2]). Bocca operates in a language-agnostic way by automatically invoking the Babel tool. Babel is a language interoperability compiler that automatically generates the glue code necessary for components written in different computer languages to communicate. It currently supports C, C++, Fortran (77, 90, 95 and 2003), Java and Python. Babel enables passing of variables with data types (e.g. objects, complex numbers) that may not normally be supported by the target language. Babel uses SIDL (Scientific Interface Definition Language), whose sole purpose is to describe the interfaces (as opposed to the implementations) of scientific model components. SIDL has a complete set of fundamental data types, from Booleans to double precision complex numbers. It also supports more sophisticated types such as enumerations, strings, objects, and dynamic multi-dimensional arrays.

CSDMS uses OpenMI (Open Modeling Interface) as its model interface standard: a standardized set of rules and supporting infrastructure specifying how a component must be written or refactored so that it can more easily exchange data with other components that adhere to the same standard (Moore and Tindall, 2005[3]; Gregersen et al., 2007[4]). Such a standard promotes interoperability between components developed by different teams across different institutions. Model components that comply with this standard can, without any additional programming, be configured to exchange data during computation (at run time). The OpenMI standard supports two-way links, in which the linked models mutually depend on each other's calculation results. Linked models may run asynchronously with respect to time steps, and data represented on different geometries (grids) can be exchanged using built-in tools for interpolating in space and time.

As a community effort, CSDMS: 1) ensures continuity and project robustness in the face of uncertain funding and institutional support; 2) reduces redundancy, since with open models and open-source code, new models can be built upon existing concepts, algorithms and code; 3) allows scientists to work with software engineers, helping to bridge the cultural and, often, institutional gap between these groups; and 4) offers transparency that promotes user participation, better testing, more robust models and wider acceptance of the results. The RCEM community is encouraged to join and promote the CSDMS effort; to provide CSDMS with their code, documentation and test cases; to employ good software development practices that favor transparency, portability, and reusability; and to include procedures for version control, bug tracking, regression testing, and release maintenance.

The CCA Tool Chain

The Common Component Architecture (CCA) is a set of tools dedicated to bringing a plug-and-play style of programming to high-performance scientific computing. CSDMS supports its application in the terrestrial, coastal, and marine modeling communities. Although the CCA tools are extensive, this section gives a brief introduction to three of the main tools of the CCA tool chain: Babel, Bocca, and the Ccaffeine GUI (Ccafe-gui).

Babel

Our modeling communities have generated a large number of useful standalone models. However, for the most part, model developers did not intend for these models to communicate with one another. Not surprisingly, then, existing models were written in a range of programming languages. This lack of language interoperability is a basic obstacle to having one model communicate with another within a programming environment.

Babel is an open-source, language interoperability compiler that automatically generates glue code necessary to allow components written in different computer languages to communicate. It currently supports C, C++, Fortran (77, 90, 95 and 2003), Java and Python. Babel is more than a least common denominator solution; it enables passing of variables with data types that may not normally be supported by the target language (e.g. objects, complex numbers). Babel was designed to support scientific, high-performance computing and is one of the key tools in the CCA tool chain.

In order to create the glue code needed for two components written in different programming languages to communicate (or pass data between them), Babel needs to be aware only of the interfaces of the two components. It does not need to know anything about how the components are implemented. Babel was designed to ingest a description of an interface in either of two fairly language-neutral forms, XML (eXtensible Markup Language) or SIDL (Scientific Interface Definition Language). The SIDL language was developed for the Babel project. Its sole purpose is to provide a concise description of a scientific software component interface. This interface description includes complete information about a component's interface, such as the data types of all arguments and return values for each of the component's methods. SIDL has a complete set of fundamental data types to support scientific computing, from Booleans to double precision complex numbers. It also supports more sophisticated data types such as enumerations, strings, objects, and dynamic multi-dimensional arrays.

Bocca

Bocca is a tool in the CCA tool chain designed to help create, edit and manage a set of CCA components and ports that are associated with a particular project. A model developer uses Bocca to prepare a set of CCA-compliant components and ports that can then be loaded into a CCA-compliant framework. Babel then compiles the linked components to create applications or composite models.

Bocca can be viewed as a development environment tool that allows application developers to perform rapid component prototyping while maintaining robust software-engineering practices suitable to HPC environments. Bocca provides project management and a comprehensive build environment for creating and managing applications composed of CCA components. Bocca operates in a language-agnostic way by automatically invoking the lower-level Babel tool. Bocca frees users from mundane, low-level tasks so they may focus on the scientific aspects of their applications. Bocca can be used interactively at a Unix command prompt or within shell scripts.

Ccafe-gui

Ccaffeine is one of many CCA-compliant frameworks for linking components, but it is the one used most often. There are at least three ways to use Ccaffeine:

- with a graphical user interface,

- at an interactive command prompt or

- with a Ccaffeine script.

The GUI (Ccafe-gui) is especially helpful for new users and for demonstrations and simple prototyping, while scripting is often faster for programmers and provides them with greater flexibility.

Ccafe-gui is easy to use. It consists of a palette of components that are available to the user. The components may be stored locally or in a repository on a remote server. The user pulls components from the palette into an arena, where they can be connected with one another. When a component is placed in the arena, an instance of it is created that is ready to be connected with other compatible components.

Component boxes contain one or more ports that are labeled as a particular type of port. It is through these ports that models communicate and use the functionality of other components. Any two components that have the same port can be connected by clicking first on the port of one and then the same-named port of the other. A link connects these ports to indicate that the components are connected. In this way, a wiring diagram is constructed that describes the composite model. The user then clicks the start button of the base component to run the new model.
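The wiring step can be mimicked in a few lines of Python. The sketch below is a toy stand-in for the arena, not Ccaffeine's API: the `Component` and `Arena` classes, their `connect` and `go` methods, and the port-type names `GoPort` and `IRFPort` are all hypothetical. It captures only the two rules described above: a link is made between a uses-port and a provides-port of the same type, and the run is started from the base component.

```python
# Toy model of a Ccafe-gui arena: components expose typed ports, and a link is
# only allowed from a uses-port to a provides-port of the same type.
# All class, method and port-type names are hypothetical, not Ccaffeine's API.


class Component:
    def __init__(self, name, provides=(), uses=()):
        self.name = name
        self.provides = set(provides)  # port types this component offers
        self.uses = set(uses)          # port types this component needs
        self.connections = {}          # uses-port type -> providing component


class Arena:
    def __init__(self):
        self.instances = {}  # instance name -> component placed in the arena
        self.links = []      # wiring diagram as (user, provider, port type)

    def add(self, component):
        self.instances[component.name] = component
        return component

    def connect(self, user_name, provider_name, port_type):
        user = self.instances[user_name]
        provider = self.instances[provider_name]
        # Refuse links between ports of different types.
        if port_type not in user.uses or port_type not in provider.provides:
            raise ValueError(
                f"cannot link {user_name} to {provider_name} on {port_type!r}")
        user.connections[port_type] = provider
        self.links.append((user_name, provider_name, port_type))

    def go(self, name):
        # Mimic pressing the start button on the base (driver) component.
        base = self.instances[name]
        if "GoPort" not in base.provides:
            raise ValueError(f"{name} cannot be started")
        missing = base.uses - set(base.connections)
        if missing:
            raise RuntimeError(f"unconnected uses-ports: {sorted(missing)}")
        return [c.name for c in base.connections.values()]


arena = Arena()
arena.add(Component("driver", provides=["GoPort"], uses=["IRFPort"]))
arena.add(Component("hillslope", provides=["IRFPort"]))
arena.connect("driver", "hillslope", "IRFPort")
print(arena.links)
print(arena.go("driver"))
```

The list in `arena.links` is the wiring diagram: a plain record of which instance feeds which, which is also what a Ccaffeine script would need to reproduce the same composite model.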

Model Interface Standards

A model should have two levels of interfaces: a user interface and a programming interface. A user interface could be a graphical user interface (GUI), through which a model user controls the model simulation in one or more graphical windows. A user interface could also be a command-line interface (CLI), which is more common for models in our community. Often the user creates a set of input files and then runs the program from the command line with a set of flags that control the model execution.

Unlike the above interfaces, a programming interface is not seen by model users, but rather by model developers. A model’s application programming interface (API) gives a programmer an interface to the functionality of that model, and at the same time obscures the details of the model’s implementation. A good model API is essential in linking existing models within a new application.

Initialize, Run, Finalize (IRF)

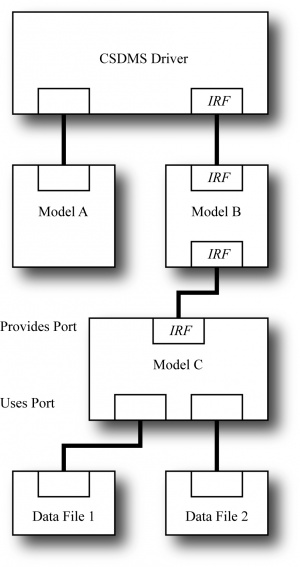

Many of the models that exist in our community do not have an extensive programming interface. This is most likely because their authors did not write them with the intent of having their models linked with other models. Typical surface dynamics models have a common structure, and so lend themselves well to a standard interface. In its basic form, this interface provides entry points into a model’s initialize, run, and finalize steps.

Most surface dynamics models advance values forward in time on a grid or mesh and have a similar internal structure. This structure consists of some lines of code before the beginning of a time loop (the initialize step), some lines of code inside the time loop (the run step), and some additional lines after the end of the time loop (the finalize step). Virtually all component-based modeling efforts have recognized the utility of moving these lines of code into three separate functions, with names such as Initialize, Run and Finalize. This simple refactoring is an important first step towards allowing a model or process module to be used either as a component within a larger application or as a standalone model. It gives a calling program fine-grained access to the model's capabilities and the ability to control the model's overall time stepping.
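A minimal sketch of this refactoring, assuming a hypothetical 1-D diffusion model written in Python (the class name, its parameters and the explicit finite-difference scheme are illustrative, not taken from any CSDMS model). The comments mark which part of the original program each method absorbs. Because `run` advances the model only up to a requested time, the calling program, not the model, owns the time loop.

```python
# Hypothetical 1-D diffusion model refactored into Initialize/Run/Finalize.
# Original structure: setup code; for each time step: update; cleanup code.


class DiffusionModel:
    def initialize(self, n=5, dt=0.1, kappa=1.0, dx=1.0):
        # Formerly the lines before the time loop: set parameters, allocate state.
        self.dt, self.kappa, self.dx = dt, kappa, dx
        self.z = [0.0] * n
        self.z[n // 2] = 1.0  # initial bump in the middle of the grid
        self.time = 0.0

    def _step(self):
        # One explicit finite-difference update; the end cells are held fixed.
        c = self.kappa * self.dt / self.dx ** 2
        z = self.z
        new = z[:]
        for i in range(1, len(z) - 1):
            new[i] = z[i] + c * (z[i + 1] - 2.0 * z[i] + z[i - 1])
        self.z = new

    def run(self, stop_time):
        # Formerly the body of the time loop: advance until stop_time is reached.
        n_steps = int(round((stop_time - self.time) / self.dt))
        for _ in range(n_steps):
            self._step()
            self.time += self.dt

    def finalize(self):
        # Formerly the lines after the time loop: report results, free memory.
        total = sum(self.z)
        self.z = None
        return total


# A calling program now controls the stepping and could interleave other models.
model = DiffusionModel()
model.initialize()
for stop in (0.5, 1.0):
    model.run(stop)
    print(f"t={model.time:.1f}", [round(v, 3) for v in model.z])
print("remaining mass:", round(model.finalize(), 3))
```

The same three methods work unchanged whether the driver is this short script (standalone use) or a framework that calls `run` on several coupled models in turn.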

Uses and Provides Ports

Within a general component framework, a component has two types of connections with other components. These connections are made through ports, which come in two varieties. Within the CCA framework, these are called provides-ports and uses-ports. A provides-port offers an interface to the component’s own functionality; a uses-port specifies a set of capabilities that the component needs from another component.

A provides-port presents to other components an interface that describes its functionality, that is, the functionality it can provide to another component that lacks (but requires) it. For instance, if a provides-port were to expose the IRF interface of the previous section, it would give another component access to its initialize, run, and finalize steps. Any interface can be exposed through a port, but a port can only be connected to another port with a matching interface.

The uses-port of a component describes functionality that the component itself lacks and therefore requires from another component. Any component that provides the required functionality is able to connect to it. Thus, the component is not able to function until it is connected to a component that has the required functionality. This allows a model developer to create a new model that uses the functionality of another component without having to know the details of that component, or even needing that component to exist yet.
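The provides/uses relationship can be sketched with Python abstract base classes. Everything here is hypothetical: `DischargePort` stands in for a port interface, `ConstantRiver` for a component that provides it, and `SedimentFlux` for a component that uses it, computing a sediment load from a simple rating curve Qs = a * Q**b with made-up coefficients. `SedimentFlux` is written against the interface only and refuses to run until something fills its uses-port.

```python
from abc import ABC, abstractmethod


class DischargePort(ABC):
    """Hypothetical port interface: anything that can report river discharge."""

    @abstractmethod
    def get_discharge(self, time: float) -> float:
        ...


class ConstantRiver(DischargePort):
    """Provides-port side: a component that implements DischargePort."""

    def __init__(self, q: float) -> None:
        self.q = q  # discharge, m^3/s

    def get_discharge(self, time: float) -> float:
        return self.q


class SedimentFlux:
    """Uses-port side: needs some DischargePort, but not any particular one."""

    def __init__(self, a: float = 0.01, b: float = 1.5) -> None:
        self.a, self.b = a, b    # made-up rating-curve coefficients
        self._discharge = None   # empty uses-port until something connects to it

    def connect(self, port: DischargePort) -> None:
        # Only a component that provides the required interface may connect.
        if not isinstance(port, DischargePort):
            raise TypeError("component does not provide DischargePort")
        self._discharge = port

    def sediment_load(self, time: float) -> float:
        # The component cannot do its job until the uses-port is filled.
        if self._discharge is None:
            raise RuntimeError("uses-port DischargePort is not connected")
        return self.a * self._discharge.get_discharge(time) ** self.b


flux = SedimentFlux()
flux.connect(ConstantRiver(100.0))
print(flux.sediment_load(0.0))
```

`SedimentFlux` could be written and tested before any real discharge model exists, since it depends only on the `DischargePort` interface.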

This style of plug-and-play component programming benefits both model programmers and users. Within this framework, model developers are able to create models within their own areas of expertise and rely on experts outside their field to fill in the gaps. Models that provide the same functionality can easily be compared with one another simply by unplugging one model and plugging in another, similar model. In this way users can easily conduct model comparisons and more simply build larger models from a series of components to solve new problems.
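Model comparison by swapping components can be sketched as follows, again with hypothetical names (`DischargePort`, `ConstantRiver`, `SeasonalRiver`, `sediment_load`, `compare`) and made-up parameters. Here the port is a `typing.Protocol`, so any class with a matching `get_discharge` method can be plugged in without inheriting from a common base. The consuming calculation is held fixed while the provider is swapped.

```python
import math
from typing import Dict, List, Protocol, Sequence


class DischargePort(Protocol):
    """Hypothetical port shared by interchangeable river models."""

    def get_discharge(self, time: float) -> float: ...


class ConstantRiver:
    """Hypothetical model A: steady discharge (m^3/s)."""

    def __init__(self, q: float) -> None:
        self.q = q

    def get_discharge(self, time: float) -> float:
        return self.q


class SeasonalRiver:
    """Hypothetical model B: annual sine cycle around a mean (time in days).

    Keep amplitude below mean so discharge stays positive.
    """

    def __init__(self, mean: float, amplitude: float, period: float = 365.0) -> None:
        self.mean, self.amplitude, self.period = mean, amplitude, period

    def get_discharge(self, time: float) -> float:
        return self.mean + self.amplitude * math.sin(2.0 * math.pi * time / self.period)


def sediment_load(port: DischargePort, time: float, a: float = 0.01, b: float = 1.5) -> float:
    """The same consuming calculation for every plugged-in model."""
    return a * port.get_discharge(time) ** b


def compare(models: Dict[str, DischargePort], times: Sequence[float]) -> Dict[str, List[float]]:
    """Plug each candidate into the same consumer and collect its output."""
    return {name: [sediment_load(m, t) for t in times] for name, m in models.items()}


results = compare(
    {"constant": ConstantRiver(100.0), "seasonal": SeasonalRiver(100.0, 50.0)},
    times=[0.0, 91.25],
)
for name, loads in results.items():
    print(name, [round(x, 2) for x in loads])
```

Structural typing keeps the two river models independent of each other; the only shared agreement is the shape of the port, which mirrors how components that provide the same port can be exchanged in the arena.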

Annotations for Model Developers

Once a model has been written so that it provides entry points to its functionality (whether it be an IRF interface, or otherwise) it can be wrapped as a component to be used within a modeling framework (such as the CCA framework described above). The precise steps needed to do this depend on the framework. However, if the model contains sufficiently descriptive metadata, it can be easily imported into any modeling framework.

Because modeling frameworks may change over time, it is important to provide this type of metadata within a model so that it does not tie itself to any one framework. If a framework injects too much of itself into a model, the model becomes reliant on that framework. It should be that the framework relies on its set of models, not the other way around. To help with this problem, a key step when refactoring one’s model is to annotate the model source code so that the required metadata stays with the model. Using special keywords within comment blocks, a programmer is able to provide basic metadata for a model and its variables that is closely tied to the model but doesn’t affect how the model itself is written. For example, metadata for a variable could follow its declaration in a comment that describes its units, valid range of values and whether it is used for input or output. Another annotation could identify a particular function as being the model’s initialize, run, or finalize step. This type of annotation makes it possible to write utilities that parse the source code, extract the metadata and then automatically generate whatever component interface is required for compatibility with other models. In fact, this metadata could be automatically extracted and used for a wide range of purposes such as generating documentation, or providing an overview of the state of a community’s models.
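To make the idea concrete, here is a sketch of such a utility in Python. The annotation syntax (`@var` and `@irf` comment tags followed by `key=value` pairs) is hypothetical and not an established CSDMS convention, as are the C declarations and function names in the sample source. The point is only that metadata kept in comments can be extracted mechanically without changing how the model code itself is written.

```python
import re

# Sample annotated C source. The "@var" / "@irf" tag syntax and the function
# names are hypothetical; any parseable convention would serve the same purpose.
SOURCE = """
double dt;                /* @var units=s range=[0,86400] intent=in */
double elevation[100];    /* @var units=m intent=out */
void hillslope_init(void);               /* @irf step=initialize */
void hillslope_run(double stop_time);    /* @irf step=run */
void hillslope_finalize(void);           /* @irf step=finalize */
"""

# Declaration text, followed by a comment that begins with @var or @irf.
ANNOTATION = re.compile(r"^(?P<decl>.*?)/\*\s*@(?P<kind>var|irf)\s+(?P<body>.*?)\s*\*/")
# The identifier just before an optional [size] or (args) and the semicolon.
DECL_NAME = re.compile(r"(\w+)\s*(?:\[[^\]]*\])?\s*(?:\([^)]*\))?\s*;\s*$")
# key=value pairs, where a value may be a bracketed range.
PAIR = re.compile(r"(\w+)=(\[[^\]]*\]|\S+)")


def extract_metadata(source):
    variables, irf = {}, {}
    for line in source.splitlines():
        match = ANNOTATION.match(line.strip())
        if not match:
            continue  # ordinary code and ordinary comments are ignored
        name = DECL_NAME.search(match.group("decl").strip())
        if not name:
            continue
        fields = dict(PAIR.findall(match.group("body")))
        if match.group("kind") == "var":
            variables[name.group(1)] = fields
        else:
            irf[fields["step"]] = name.group(1)
    return {"variables": variables, "irf": irf}


meta = extract_metadata(SOURCE)
print(meta["irf"])
print(meta["variables"]["dt"])
```

The resulting dictionary could then feed a generator for component wrappers or documentation; such a generator is not shown.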

Requirements for Code Contributors

Although this paper has focused primarily on linking models as components, this is only one goal of CSDMS. Of equal importance to CSDMS is organizing its community’s models. To this end, CSDMS seeks models of all types, and has few requirements for code contributors. We ask only two things: that models be licensed under an open-source license, and that a form giving basic metadata for the model be filled out.

Summary

CSDMS looks to its community for support and supports the community in return. Through a model repository, CSDMS organizes models so that they have a home that is independent of the funding that built them. The repository clearly presents the state of a community’s models and identifies areas of duplication as well as gaps in model coverage. The open-source nature of the repository gives transparency to what used to be black-box models. This allows better model testing and verification, faster development, and wider model acceptance.

The CSDMS modeling framework will provide the community with a set of model components with standard interfaces that can be linked with one another. This allows model developers to concentrate on writing the models they know best. Thus, model users can be confident that they are using their community’s best model, even if they are not themselves part of that community of experts.

References

- Bernholdt, D.E., Allan, B.A., Armstrong, R., Bertrand, F., Chiu, K., Dahlgren, T.L., Damevski, K., Elwasif, W.R., Epperly, T.G.W., Govindaraju, M., Katz, D.S., Kohl, J.A., Krishnan, M., Kumfert, G., Larson, J.W., Lefantzi, S., Lewis, M.J., Malony, A.D., McInnes, L.C., Nieplocha, J., Norris, B., Parker, S.G., Ray, J., Shende, S., Windus, T.L. & Zhou, S. 2006. A component architecture for high-performance scientific computing. Intl. J. High Performance Computing Applications, ACTS Collection Special Issue, 20(2): 163-202.

- Elwasif, W., Norris, B., Allan, B. & Armstrong, R. 2007. Bocca: A development environment for HPC components. Proceedings of the 2007 Symposium on Component and Framework Technology in High-Performance and Scientific Computing, Montreal, Canada. Association for Computing Machinery, New York: 21-30.

- Moore, R.V. & Tindall, I. 2005. An overview of the Open Modelling Interface and Environment (the OpenMI). Environmental Science & Policy, 8: 279-286.

- Gregersen, J.B., Gijsbers, P.J.A. & Westen, S.J.P. 2007. OpenMI: Open Modeling Interface. Journal of Hydroinformatics, 9(3): 175-191.